Apache Spark

Basic Interview Q&A

1. What is Apache Spark, and how does it differ from Hadoop?

Apache Spark is an open-source distributed computing framework that provides an interface for programming clusters with implicit data parallelism and fault tolerance. It differs from Hadoop in several ways:

Spark performs in-memory processing which makes it faster than Hadoop's disk-based processing model.

Spark provides a more extensive set of libraries and supports multiple programming languages, whereas Hadoop mainly focuses on batch processing with MapReduce.

2. Explain the concept of RDD (Resilient Distributed Dataset).

RDD stands for Resilient Distributed Dataset, the fundamental data structure in Spark that represents an immutable, partitioned collection of records. RDDs are fault-tolerant, meaning they can recover from failures automatically.

They allow for parallel processing across a cluster of machines, enabling distributed data processing. They can be created from data stored in Hadoop Distributed File System (HDFS), local file systems, or by transforming existing RDDs.

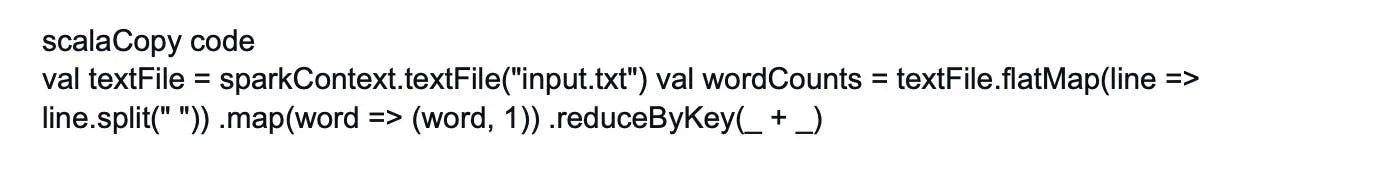

3. What are the different ways to create RDDs in Spark?

There are three main ways to create RDDs in Spark:

- By parallelizing an existing collection in the driver program using the parallelize() method.

- By referencing a dataset in an external storage system, such as Hadoop Distributed File System (HDFS), using the textFile() method.

- By transforming existing RDDs through operations like map(), filter(), or reduceByKey().

4. How does Spark handle fault tolerance?

Spark achieves fault tolerance through RDDs. RDDs are resilient because they track the lineage of transformations applied to a base dataset. Whenever a partition of an RDD is lost, Spark can automatically reconstruct the lost partition by reapplying the transformations from the original dataset. This lineage information allows Spark to handle failures and ensure fault tolerance without requiring the explicit replication of data.
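The recovery idea described above can be sketched in plain Python. This is a toy model, not Spark code: the `ToyRDD` class and its `materialized` dictionary are hypothetical names invented for illustration, and real Spark tracks lineage per partition inside its scheduler.

```python
# Plain-Python model of lineage-based recovery; this is not the Spark API.
class ToyRDD:
    """A partitioned dataset that remembers how it was derived."""

    def __init__(self, source_partitions=None, parent=None, fn=None):
        self.source_partitions = source_partitions  # only set on the base dataset
        self.parent = parent                        # lineage: the dataset this one came from
        self.fn = fn                                # lineage: per-record function applied
        self.materialized = {}                      # partition index -> computed records

    def map(self, fn):
        # Record the transformation instead of copying any data.
        return ToyRDD(parent=self, fn=fn)

    def compute(self, index):
        """Return one partition, rebuilding it from lineage if it is missing."""
        if index not in self.materialized:
            if self.parent is None:
                records = list(self.source_partitions[index])
            else:
                records = [self.fn(r) for r in self.parent.compute(index)]
            self.materialized[index] = records
        return self.materialized[index]


base = ToyRDD(source_partitions=[[1, 2], [3, 4]])
doubled = base.map(lambda x: x * 2)
shifted = doubled.map(lambda x: x + 1)

before = shifted.compute(1)
del shifted.materialized[1]   # simulate losing a partition on a failed executor
del doubled.materialized[1]
after = shifted.compute(1)    # rebuilt from the base data via the recorded functions
```

Only the lost partition is recomputed; partition 0 is never touched during recovery, which is why lineage avoids the cost of replicating every partition up front.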

5. Describe the difference between transformations and actions in Spark.

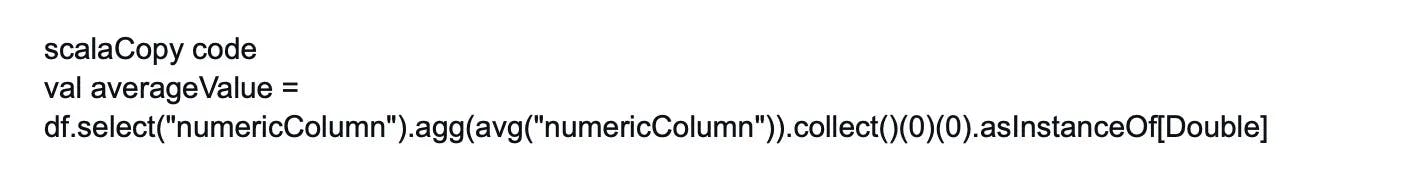

Transformations in Spark are operations that produce a new RDD from an existing one such as map(), filter(), or reduceByKey(). Transformations are lazy, meaning they are not executed immediately but rather recorded as a sequence of operations to be performed when an action is called.

Actions in Spark trigger the execution of transformations and return results to the driver program or write data to an external storage system. Examples of actions include count(), collect(), or saveAsTextFile(). Actions are eager and cause the execution of all previously defined transformations in order to compute a result.

6. What are your thoughts on DStreams in Spark?

A Discretized Stream (DStream) is the basic abstraction in Spark Streaming: a continuous sequence of RDDs. All RDDs in a DStream are of the same type and together represent a continuous stream of data, with each RDD holding the data received during one time interval.

DStreams accept input from a variety of sources, including Kafka, Flume, Kinesis, and TCP connections. A DStream can also be produced by transforming another DStream. DStreams help developers by providing a high-level API and fault tolerance.
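To make the "one RDD per time interval" idea concrete, here is a small plain-Python model of discretizing a stream. It is a sketch under assumptions: `discretize` and the sample events are hypothetical, and real Spark Streaming cuts batches by arrival time inside its receivers rather than from a finished list.

```python
def discretize(events, batch_interval):
    """Group (timestamp, value) events into consecutive fixed-length batches."""
    batches = {}
    for timestamp, value in events:
        batches.setdefault(int(timestamp // batch_interval), []).append(value)
    if not batches:
        return []
    # Intervals with no events still yield an (empty) batch, like an empty RDD.
    return [batches.get(i, []) for i in range(max(batches) + 1)]


events = [(0.2, "a"), (0.9, "b"), (1.1, "c"), (2.5, "d"), (2.7, "e"), (4.3, "f")]
micro_batches = discretize(events, batch_interval=1.0)

# Any per-batch computation is applied to each batch in turn.
counts_per_batch = [len(batch) for batch in micro_batches]
```

Each inner list plays the role of one RDD in the DStream, and a DStream transformation corresponds to applying the same function to every batch.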

7. What are the various cluster managers that are available in Apache Spark?

This is a common Spark interview question. The cluster managers are:

Standalone Mode: By default, a standalone cluster runs applications in FIFO order, with each application trying to use all available nodes. You can launch a standalone cluster by starting a master and workers by hand. These daemons can also be run on a single machine for testing.

Apache Mesos: Apache Mesos is an open-source project that can run Hadoop applications as well as manage computer clusters. The benefits of using Mesos to deploy Spark include dynamic partitioning between Spark and other frameworks, as well as scalable partitioning across several instances of Spark. Note that Mesos support has been deprecated since Spark 3.2.

Hadoop YARN: YARN is the cluster resource manager introduced in Hadoop 2. Spark can run on YARN.

Kubernetes: Kubernetes is an open-source solution for automating containerized application deployment, scaling, and management.

8. What makes Spark so effective in low-latency applications like graph processing and machine learning?

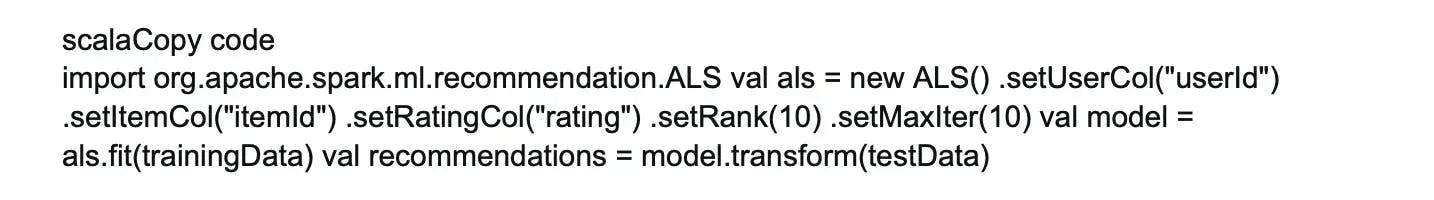

Apache Spark caches data in memory, which speeds up processing and the training of machine learning models. Machine learning algorithms typically need many iterations over the same data to arrive at a good model, and graph algorithms repeatedly traverse all of the nodes and edges. Because these workloads pass over the same data many times, keeping that data in memory instead of rereading it from disk on every iteration improves performance.

9. What precisely is a Lineage Graph?

A Lineage Graph is a graph of the dependencies between an existing RDD and the RDDs derived from it. Rather than storing the derived data itself, Spark records every dependency between RDDs in this graph.

An RDD lineage graph is needed whenever Spark computes a new RDD or has to rebuild lost partitions of a persisted RDD. By default, Spark does not replicate RDD data in memory (replicated storage levels such as MEMORY_ONLY_2 are opt-in), so lost data is recreated from the RDD lineage instead. The lineage graph is sometimes referred to as an RDD operator graph or an RDD dependency graph.

10. What is lazy evaluation in Spark?

Lazy evaluation in Spark means that transformations on RDDs are not executed immediately. Instead, Spark records the sequence of transformations applied to an RDD and builds a directed acyclic graph (DAG) representing the computation. This approach allows Spark to optimize and schedule the execution plan more efficiently. The transformations are evaluated lazily only when an action is called and the results are needed.
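The behavior can be imitated in a few lines of plain Python. This is only a model of the idea: `LazyPipeline` is a hypothetical class, and it records a simple linear list of steps rather than a full DAG.

```python
class LazyPipeline:
    """Records transformations and runs them only when an action is called."""

    def __init__(self, data, steps=()):
        self._data = data
        self._steps = tuple(steps)

    def map(self, fn):
        # Transformation: returns a new pipeline, executes nothing.
        return LazyPipeline(self._data, self._steps + (("map", fn),))

    def filter(self, predicate):
        # Transformation: returns a new pipeline, executes nothing.
        return LazyPipeline(self._data, self._steps + (("filter", predicate),))

    def collect(self):
        # Action: runs every recorded step over every record.
        result = []
        for item in self._data:
            keep = True
            for kind, fn in self._steps:
                if kind == "map":
                    item = fn(item)
                elif kind == "filter" and not fn(item):
                    keep = False
                    break
            if keep:
                result.append(item)
        return result


calls = []

def traced_square(x):
    calls.append(x)  # lets us observe when the function actually runs
    return x * x

pipeline = LazyPipeline(range(5)).map(traced_square).filter(lambda x: x % 2 == 0)
calls_before_action = list(calls)  # still empty: nothing has executed yet
result = pipeline.collect()        # the action triggers the whole chain
```

Because the steps are known before anything runs, the map and the filter are applied to each record in a single pass, which is the same reason Spark can pipeline consecutive transformations.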

11. Explain the role of a Spark driver program.

The Spark driver program is the main program that defines the RDDs, transformations, and actions to be executed on a Spark cluster. In client deploy mode it runs on the machine where the Spark application is submitted; in cluster deploy mode it runs on a node inside the cluster. It is responsible for creating the SparkContext, which establishes a connection to the cluster manager. The driver program coordinates the execution of tasks on the worker nodes and collects the results from the distributed computations.

12. What is a Spark executor?

A Spark executor is a process launched on worker nodes in a Spark cluster. Executors are responsible for executing tasks assigned by the driver program. Each executor runs multiple tasks concurrently and manages the memory and storage resources allocated to those tasks.

Executors communicate with the driver program and coordinate with each other to process data in parallel.

13. Are Checkpoints provided by Apache Spark?

Yes, Apache Spark provides an API for adding and managing checkpoints. Checkpointing is the practice of making streaming applications resilient to failures. It lets you save data and metadata to a checkpointing directory. In the event of a failure, Spark can recover this data and resume where it left off.

Checkpointing in Spark can be used for two sorts of data.

- Metadata Checkpointing: Metadata is data about data. Metadata checkpointing stores the information that defines the streaming computation in a fault-tolerant storage system such as HDFS. Configurations, DStream operations, and incomplete batches are all examples of metadata.

- Data Checkpointing: In this case, we store the RDD in a reliable storage location because it is required by some of the stateful transformations.

14. What role do accumulators play in Spark?

Accumulators are variables that tasks running on executors can only add to and that the driver can read, which makes them useful for aggregating information across executors. This information can describe the data or serve as an API diagnostic, such as how many corrupted records were seen or how many times a library API was called.
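The pattern behind an accumulator can be shown with a plain-Python model. In PySpark the real calls are `sc.accumulator(0)` and `acc.add(1)`; the sketch below does not use Spark at all, and `parse_partition` and the sample data are hypothetical.

```python
def parse_partition(partition):
    """Task-side work: parse one partition, counting bad records locally."""
    parsed, bad = [], 0
    for raw in partition:
        try:
            parsed.append(int(raw))
        except ValueError:
            bad += 1  # a task only ever adds to its own partial count
    return parsed, bad


partitions = [["1", "2", "x"], ["3", "", "4"], ["oops"]]

bad_records_total = 0  # plays the role of the accumulator value on the driver
parsed_values = []
for partition in partitions:  # in Spark, each partition would be a separate task
    parsed, local_bad = parse_partition(partition)
    parsed_values.extend(parsed)
    bad_records_total += local_bad  # the driver merges each task's partial count
```

Tasks never read the running total; they only contribute increments, and the merged value is meaningful only on the driver side.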

15. What are the benefits of Spark's in-memory computation?

Spark's in-memory computation offers several benefits:

Faster processing: By keeping data in memory, Spark avoids the disk I/O bottleneck of traditional disk-based processing which results in significantly faster execution times.

Iterative and interactive processing: In-memory computation allows for efficient iterative algorithms and interactive data exploration as intermediate results can be cached in memory.

Simplified programming model: Developers can use the same programming APIs for batch, interactive, and real-time processing without worrying about data serialization/deserialization or disk I/O.

16. Explain the concept of caching in Spark Streaming.

Caching, often known as Persistence, is a strategy for optimizing Spark calculations. DStreams, like RDDs, allow developers to store the stream's data in memory. That is, calling the persist() method on a DStream will automatically keep all RDDs in that DStream in memory. It is beneficial to store interim partial results so that they can be reused in later stages.

For input streams that receive data via the network, the default persistence level is set to replicate the data to two nodes for fault tolerance.

17. Define shuffling in Spark.

Shuffling is the process of redistributing data across partitions, which may move data between executors. Spark implements the shuffle differently from Hadoop.

Shuffling has two important compression parameters:

- spark.shuffle.compress – controls whether the engine compresses shuffle output files.

- spark.shuffle.spill.compress – controls whether intermediate shuffle spill files are compressed.

18. Describe the concept of a shuffle operation in Spark.

A shuffle operation in Spark refers to the process of redistributing data across partitions, typically performed when there is a change in the partitioning of data. It involves a data exchange between different nodes in the cluster as records need to be shuffled to their appropriate partitions based on a new partitioning scheme. Shuffles are expensive operations in terms of network and disk I/O, and they can impact the performance of Spark applications.
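As a rough illustration of the redistribution step, the following plain-Python sketch routes key-value records to new partitions by hashing the key. It is a model only: `partition_for` and `shuffle` are hypothetical helpers, and `zlib.crc32` stands in for Spark's own hash partitioning simply because it gives the same result on every run.

```python
import zlib


def partition_for(key, num_partitions):
    # Stable hash of the key, reduced to a partition index.
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def shuffle(input_partitions, num_partitions):
    """Move every record to the output partition chosen by its key."""
    output = [[] for _ in range(num_partitions)]
    for partition in input_partitions:
        for key, value in partition:
            output[partition_for(key, num_partitions)].append((key, value))
    return output


input_partitions = [[("a", 1), ("b", 2)], [("a", 3), ("c", 4)], [("b", 5)]]
shuffled = shuffle(input_partitions, num_partitions=2)
```

After the shuffle, all records that share a key sit in the same partition, which is what key-based operations need. In a real cluster each move between partitions may cross the network, which is where the cost comes from.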

19. What are the many features that Spark Core supports?

Spark Core is the engine that processes large data sets in parallel and distributed mode. Spark Core provides the following functionalities:

- Job scheduling and monitoring

- Memory management

- Fault detection and recovery

- Interacting with storage systems

- Task distribution, etc.

20. How can you persist RDDs in Spark?

RDDs in Spark can be persisted in memory or on disk using the persist() or cache() methods. When an RDD is persisted, its partitions are stored in memory or on disk, depending on the storage level specified. Persisting RDDs allows for faster access and reusability of intermediate results across multiple computations, reducing the need for recomputation.
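The effect of persisting can be seen in a small plain-Python model that counts how often an expensive step runs. `ToyDataset`, `expensive_transform`, and `stats` are hypothetical names for illustration, and the sketch keeps results only in memory with no storage levels.

```python
stats = {"computations": 0}


def expensive_transform(records):
    stats["computations"] += 1  # count how many times the work is redone
    return [r * 10 for r in records]


class ToyDataset:
    def __init__(self, records):
        self._records = records
        self._persist = False
        self._cached = None

    def persist(self):
        self._persist = True  # only marks the dataset; nothing is computed yet
        return self

    def _materialize(self):
        if self._cached is not None:
            return self._cached
        result = expensive_transform(self._records)
        if self._persist:
            self._cached = result  # keep the result for later actions
        return result

    def count(self):
        return len(self._materialize())

    def total(self):
        return sum(self._materialize())


uncached = ToyDataset([1, 2, 3])
uncached.count()
uncached.total()
calls_without_persist = stats["computations"]  # one computation per action

stats["computations"] = 0
cached = ToyDataset([1, 2, 3]).persist()
cached.count()
cached.total()
calls_with_persist = stats["computations"]  # computed once, then reused
```

As in Spark, calling `persist()` does no work by itself; the data is stored the first time an action computes it.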

21. What is the significance of SparkContext in Spark?

SparkContext is the entry point for any Spark functionality in a Spark application. It represents the connection to a Spark cluster and enables the execution of operations on RDDs.

SparkContext provides access to various configuration options, cluster managers, and input/output functions. It is responsible for coordinating the execution of tasks on worker nodes and communicating with the cluster manager.

22. How can you monitor the progress of a Spark application?

Spark provides various mechanisms to monitor the progress of a Spark application:

Spark web UI: Spark automatically starts a web UI that provides detailed information about the application including job progress, stages, tasks, and resource usage.

Logs: Spark logs detailed information about the application's progress, errors, and warnings. These logs can be accessed and analyzed to monitor the application.

Cluster manager interfaces: If Spark is running on a cluster manager like YARN (Yet Another Resource Negotiator) or Mesos, their respective UIs can provide insights into the application's progress and resource utilization.

23. What is the difference between Apache Spark and Apache Flink?

Apache Spark and Apache Flink are both distributed data processing frameworks, but they have some differences:

Processing model: Spark was designed primarily for batch and interactive processing and handles streams as a series of micro-batches, while Flink is a native stream processor that also supports batch processing. Flink offers built-in support for event time processing and provides more advanced windowing and streaming capabilities.

Data processing APIs: Spark provides high-level APIs, including RDDs, DataFrames, and Datasets, which abstract the underlying data structures. Flink provides a unified streaming and batch API called the DataStream API that offers fine-grained control over time semantics and event processing.

Fault tolerance: Spark achieves fault tolerance through RDD lineage, while Flink uses a mechanism called checkpointing that periodically saves the state of operators to enable recovery from failures.

Memory management: Spark's in-memory computation is based on resilient distributed datasets (RDDs), whereas Flink uses a combination of managed memory and disk-based storage to optimize the usage of memory.

24. Explain the concept of lineage in Spark.

Lineage in Spark refers to the history of the sequence of transformations applied to an RDD. Spark records the lineage of each RDD which defines how the RDD was derived from its parent RDDs through transformations.

By maintaining this lineage information, Spark can automatically reconstruct lost partitions of an RDD by reapplying the transformations from the original data. This ensures fault tolerance and data recovery.

25. What is the significance of the Spark driver node?

The Spark driver node is the machine that runs the driver program, which defines the RDDs, transformations, and actions to be performed on the Spark cluster. In client deploy mode this is the machine where the Spark application is submitted; in cluster deploy mode it is a node inside the cluster.

It communicates with the cluster manager to acquire resources and coordinate the execution of tasks on worker nodes. The driver node collects the results from the distributed computations and returns them to the user or writes them to an external storage system.

26. How can you handle missing data in Spark DataFrames?

In Spark DataFrames, missing or null values can be handled using various methods:

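Dropping rows: You can drop rows containing missing values using the dropna() method (equivalently, df.na.drop()). Note that drop() on a DataFrame removes columns, not rows with missing values.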

Filling missing values: You can fill missing values with a specific default value using the fillna() method.

Imputing missing values: Spark provides functions like Imputer that can replace missing values with statistical measures, such as mean, median, or mode, based on other non-missing values in the column.

27. Describe the concept of serialization in Spark.

Serialization in Spark refers to the process of converting objects into a byte stream to be transmitted over the network or stored in memory or disk. Spark uses efficient serialization frameworks like Java's ObjectOutputStream or the more optimized Kryo serializer.

Serialization is crucial in Spark's distributed computing model as it allows objects, partitions, and closures to be sent across the network and executed on remote worker nodes.

28. What are the advantages of using Spark over traditional MapReduce?

Spark offers several advantages over traditional MapReduce:

In-memory computation: Spark performs computations in-memory, significantly reducing disk I/O and improving performance.

Faster data processing: Spark's DAG execution engine optimizes the execution plan and provides a more efficient processing model, resulting in faster data processing.

Rich set of libraries: Spark provides a wide range of libraries for machine learning (MLlib), graph processing (GraphX), and real-time stream processing (Spark Streaming) to enable diverse data processing tasks.

Interactive and iterative processing: Spark supports interactive queries and iterative algorithms, allowing for real-time exploration and faster development cycles.

Fault tolerance: Spark's RDD lineage provides automatic fault tolerance and data recovery, eliminating the need for explicit data replication.

29. How does Spark handle data partitioning?

Spark handles data partitioning by distributing the data across multiple partitions in RDDs or DataFrames. Data partitioning allows Spark to process the data in parallel across a cluster of machines.

Spark provides control over partitioning through partitioning functions or by specifying the number of partitions explicitly. It also performs automatic data partitioning during shuffle operations to optimize data locality and parallelism.

30. Explain the difference between local and cluster modes in Spark.

In local mode, Spark runs on a single machine - typically the machine where the driver program is executed. The driver program and Spark worker tasks run within the same JVM, enabling local parallelism on multiple cores or threads.

In cluster mode, Spark is deployed on a cluster of machines with the driver program running on one machine (driver node) and Spark workers executing tasks on other machines (worker nodes). The driver program coordinates the execution of tasks on the worker nodes. Data is distributed across the cluster for parallel processing.

31. What is the role of SparkContext and SQLContext in Spark?

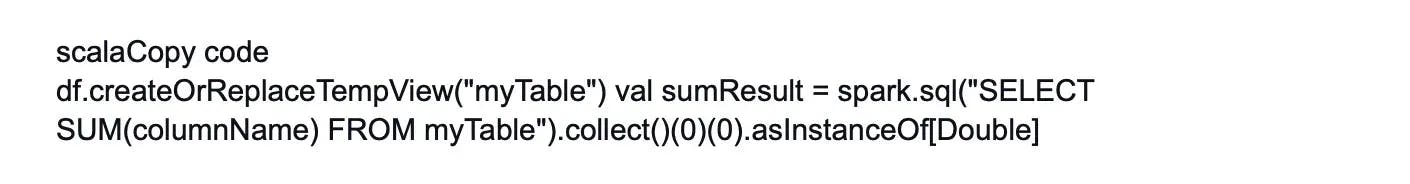

SparkContext is the entry point for any Spark functionality and represents the connection to a Spark cluster. It allows the creation of RDDs, defines configuration options, and coordinates the execution of tasks on the cluster.

SQLContext is a higher-level entry point for working with structured data through Spark's DataFrame and Dataset APIs. It is built on top of a SparkContext and adds the ability to execute SQL queries, read data from various sources, and perform relational operations on distributed data. Since Spark 2.0, SparkSession has been the unified entry point that covers both roles, and SQLContext is kept mainly for backward compatibility.

32. How can you handle skewed data in Spark?

To handle skewed data in Spark, you can use techniques like:

Data skew detection: Identify skewed keys or partitions by analyzing the data distribution. Techniques like sampling, histograms, or analyzing partition sizes can help in detecting skewed data.

Skew join handling: For skewed joins, you can use techniques like data replication, where you replicate the data of skewed keys/partitions to balance the load. Alternatively, you can use broadcast joins for smaller skewed datasets.

Data partitioning: Adjusting the partitioning scheme can help distribute the skewed data more evenly. Custom partitioning functions or bucketing can be used to redistribute the data.
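One common form of the replication technique is key salting. The plain-Python sketch below models it for a join: the hot key on the large side gets a random suffix, and the matching row on the small side is copied once per suffix. Everything here is an assumption for illustration: the helper names, the `#` separator (keys are assumed not to contain it), and the choice of 4 salts.

```python
import random
from collections import Counter


def salt_key(key, hot_keys, num_salts, rng):
    """Large side: spread a hot key over num_salts sub-keys."""
    if key in hot_keys:
        return f"{key}#{rng.randrange(num_salts)}"
    return key


def expand_small_side(rows, hot_keys, num_salts):
    """Small side: replicate hot-key rows once per salt so every sub-key matches."""
    expanded = []
    for key, value in rows:
        if key in hot_keys:
            expanded.extend((f"{key}#{i}", value) for i in range(num_salts))
        else:
            expanded.append((key, value))
    return expanded


rng = random.Random(0)  # fixed seed so the run is repeatable
hot_keys = {"hot"}
large = [("hot", n) for n in range(1000)] + [("cold", 1), ("mild", 2)]
small = [("hot", "H"), ("cold", "C"), ("mild", "M")]

salted_large = [(salt_key(k, hot_keys, 4, rng), v) for k, v in large]
salted_small = dict(expand_small_side(small, hot_keys, 4))

# Join on the salted key, then strip the salt to recover the original key.
joined = [(k.split("#")[0], v, salted_small[k]) for k, v in salted_large]
rows_per_salted_key = Counter(k for k, _ in salted_large)
```

The 1,000 rows that originally shared one key are now split across up to four sub-keys, so no single partition has to process all of them, while the join result is unchanged.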

33. Describe the difference between narrow and wide transformations in Spark.

In Spark, narrow transformations are operations where each input partition contributes to only one output partition. Narrow transformations are performed locally on each partition without the need for data shuffling across the network. Examples of narrow transformations include map(), filter(), and union().

Wide transformations, on the other hand, are operations that require data shuffling across partitions. They involve operations like grouping, aggregating, or joining data across multiple partitions. Wide transformations can change the number of partitions and require network communication. Examples of wide transformations are groupByKey(), reduceByKey(), and join().
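The contrast can be modeled in plain Python with lists standing in for partitions. This is a sketch, not Spark code: `reduce_by_key` is a hypothetical helper that sums values per key, and the routing rule based on the first character of the key is an arbitrary stand-in for a real partitioner.

```python
from collections import defaultdict

partitions = [[("a", 1), ("b", 2), ("a", 3)], [("b", 4), ("c", 5)]]

# Narrow: each output partition is built from exactly one input partition.
mapped = [[(k, v * 10) for k, v in part] for part in partitions]


def reduce_by_key(parts, num_output_partitions):
    """Wide: all values for a key must end up in the same output partition."""
    # Step 1: combine values locally inside each input partition.
    combined = []
    for part in parts:
        local = defaultdict(int)
        for k, v in part:
            local[k] += v
        combined.append(local)
    # Step 2: route each key to one output partition and merge the partial sums.
    out = [defaultdict(int) for _ in range(num_output_partitions)]
    for local in combined:
        for k, v in local.items():
            out[ord(k[0]) % num_output_partitions][k] += v
    return [dict(d) for d in out]


reduced = reduce_by_key(mapped, 2)
```

The map step never looks outside its own partition, whereas the reduce step has to gather the partial sums for "b" from both input partitions, which is the data movement a shuffle performs.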

34. What is the purpose of the Spark Shell?

The Spark Shell is an interactive command-line tool that allows users to interact with Spark and prototype code quickly. It provides a convenient environment to execute Spark commands and write Spark applications interactively.

The Spark Shell is available for Scala (spark-shell) and Python (pyspark) and offers a read-evaluate-print loop (REPL) interface for interactive data exploration and experimentation.

35. Explain the concept of RDD lineage in Spark.

RDD lineage in Spark refers to the sequence of operations and dependencies that define how an RDD is derived from its source data or parent RDDs. Spark maintains the lineage information for each RDD, signifying the transformations applied to the original data.

This lineage information enables fault tolerance as Spark can reconstruct lost partitions by reapplying the transformations. It also allows for efficient recomputation and optimization of RDDs during execution.

36. What are the different storage levels available in Spark?

Spark provides several storage levels to manage the persistence of RDDs in memory or on disk. The storage levels include:

MEMORY_ONLY: Stores RDD partitions in memory.

MEMORY_AND_DISK: Stores RDD partitions in memory and spills to disk if necessary.

MEMORY_ONLY_SER: Stores RDD partitions in memory as serialized objects.

MEMORY_AND_DISK_SER: Stores RDD partitions in memory as serialized objects and spills to disk if necessary.

DISK_ONLY: Stores RDD partitions only on disk.

OFF_HEAP: Stores RDD partitions off-heap in serialized form.

37. How does Spark handle data serialization and deserialization?

Spark uses a pluggable serialization framework to handle data serialization and deserialization. The default serializer in Spark is Java serialization (built on ObjectOutputStream), but it also provides the more efficient Kryo serializer.

Spark uses Java serialization unless the spark.serializer property is set to another implementation, such as org.apache.spark.serializer.KryoSerializer. Developers can also customize serialization by registering their classes with Kryo, implementing custom serializers, or using third-party serialization libraries.

38. Describe the process of working with CSV files in Spark.

When working with CSV files in Spark, you can use the spark.read.csv() method to read the CSV file and create a DataFrame. By default Spark reads every column as a string; it infers column types from the data when the inferSchema option is enabled, and you can also provide a schema explicitly. You can specify various options like delimiter, header presence, and handling of null values.

Once the CSV file is read into a DataFrame, you can apply transformations and actions on the DataFrame to process and analyze the data. You can also write the DataFrame back to CSV format using the df.write.csv() method.

39. What is the significance of the Spark Master node?

The Spark Master node is the entry point and the central coordinator of a Spark cluster. It manages the allocation of resources, such as CPU and memory, to Spark applications running on the cluster.

The Master node keeps track of the available worker nodes, monitors their health, and allocates their resources to applications; scheduling individual tasks on executors is the job of each application's driver. The Master also provides a web UI and APIs to monitor the cluster and submit Spark applications.

40. How can you enable dynamic allocation in Spark?

Dynamic allocation in Spark allows for the dynamic acquisition and release of cluster resources based on the workload. To enable dynamic allocation, you need to set the following configuration properties:

spark.dynamicAllocation.enabled: Set this property to true to enable dynamic allocation.

spark.dynamicAllocation.minExecutors and spark.dynamicAllocation.maxExecutors: Set the minimum and maximum number of executors that Spark can allocate dynamically based on the workload.

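With dynamic allocation enabled, Spark automatically adjusts the number of executors based on the resource requirements and the availability of resources in the cluster. Because executors can be removed while their shuffle files are still needed, dynamic allocation also requires either the external shuffle service (spark.shuffle.service.enabled) or shuffle tracking (spark.dynamicAllocation.shuffleTracking.enabled).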

41. Explain the purpose of Spark's DAG scheduler.

Spark's DAG (Directed Acyclic Graph) scheduler is responsible for transforming the logical execution plan of a Spark application into a physical execution plan made up of stages. It analyzes the dependencies between RDDs, pipelines consecutive narrow transformations into a single stage, and takes data locality into account.

The DAG scheduler splits the execution plan into stages at shuffle boundaries and submits each stage as a set of tasks to the task scheduler, which launches them on executors using resources obtained from the cluster manager.
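The stage-splitting rule can be approximated in plain Python for a simple linear chain of operations. This is a deliberately simplified sketch: `split_into_stages` and `WIDE_OPS` are hypothetical, a real plan is a graph rather than a list, and Spark places the shuffle write at the end of the earlier stage, whereas this model just starts a new stage at each wide operation.

```python
# Operations assumed to need a shuffle in this toy model.
WIDE_OPS = {"groupByKey", "reduceByKey", "join", "repartition"}


def split_into_stages(operations):
    """Cut a linear chain of operations into stages at each wide operation."""
    stages, current = [], []
    for op in operations:
        if op in WIDE_OPS and current:
            stages.append(current)  # shuffle boundary: close the current stage
            current = []
        current.append(op)
    if current:
        stages.append(current)
    return stages


plan = ["textFile", "flatMap", "map", "reduceByKey", "filter", "join", "map"]
stages = split_into_stages(plan)
```

Here the narrow operations `textFile`, `flatMap`, and `map` land in one stage and can be pipelined over each partition, while each shuffle forces a new stage to begin.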

42. What is the difference between cache() and persist() methods in Spark?

Both cache() and persist() methods in Spark allow you to persist RDDs or DataFrames in memory or on disk. The main difference lies in the default storage level used:

cache(): This method is shorthand for persist() with the default storage level, which is MEMORY_ONLY for RDDs and MEMORY_AND_DISK for DataFrames and Datasets.

persist(): This method allows you to specify the storage level explicitly. You can choose from different storage levels, such as MEMORY_ONLY, MEMORY_AND_DISK or DISK_ONLY, based on your requirements.

Both methods provide the same functionality of persisting the data. You can specify the storage level for persist() to achieve the same behavior as cache().

43. Describe the process of working with text files in Spark.

To work with text files in Spark, you can use the spark.read.text() method to create a DataFrame, or the SparkContext textFile() method to create an RDD, with each line as a separate record. Spark provides various options to handle text file formats such as compressed files, multi-line records, and encoding.

Once the text file is read, you can apply transformations and actions on the RDD or DataFrame to process and analyze the data. You can write an RDD back to text format using the saveAsTextFile() method, or a DataFrame using df.write.text().

44. What is the role of the Spark worker node?

The Spark worker node is responsible for executing tasks assigned by the driver program. It runs on the worker machines in the Spark cluster and manages the execution of tasks in parallel. Each worker node has its own executor(s) and is responsible for executing a portion of the overall workload.

The worker nodes communicate with the driver program and perform the actual computation and data processing tasks assigned to them.

45. How can you handle skewed keys in Spark SQL joins?

To handle skewed keys in Spark SQL joins, you can use techniques like:

Replication: Identify the skewed keys and replicate the data of those keys across multiple partitions to balance the load.

Broadcast join: Use the broadcast join technique for smaller skewed datasets.

Custom partitioning: Apply custom partitioning techniques to redistribute the data and reduce the skew, if possible.

46. Explain the concept of Spark's standalone mode.

Spark's standalone mode is a built-in cluster manager provided by Spark itself. Here, Spark cluster resources are managed directly by the Spark cluster manager without relying on external cluster management systems like YARN or Mesos. It allows you to run Spark applications on a cluster of machines by starting a master and multiple worker nodes.

Wrapping up

These questions cover a range of topics that allow you to demonstrate your Spark knowledge and competence. They will help you be better prepared for your interview and boost your chances of success. Practicing coding can consolidate your comprehension of Spark topics.

If you're a hiring manager looking for Spark experts, Turing can connect you with top talent from around the globe. Our AI-powered talent cloud enables Silicon Valley organizations to quickly enroll pre-vetted software developers in a few clicks.
